Quickstart¶

docker run -it -p 8080:8080 iceychris/libreasr:latest

The output looks like this:

make sde &

make sen &

make b

make[1]: Entering directory '/workspace'

python3 -u api-server.py de

make[1]: Entering directory '/workspace'

python3 -u api-bridge.py

make[1]: Entering directory '/workspace'

python3 -u api-server.py en

[api-bridge] running on :8080

[quantization] LM done.

[quantization] LM done.

[LM] loaded.

[LM] loaded.

[quantization] Transducer done.

[Inference] Model and Pipeline set up.

[api-server] gRPC server running on [::]:50052 language de

[quantization] Transducer done.

[Inference] Model and Pipeline set up.

[api-server] gRPC server running on [::]:50051 language en

Once both api-server instances report that they are running, point your browser to http://localhost:8080/
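Loading and quantizing the models takes a while, so the page may not respond immediately after `docker run`. The following is a minimal sketch of a readiness check that polls the published port until it accepts TCP connections. The helper name `wait_for_port` and its parameters are made up for this example and are not part of LibreASR; a successful connection only shows that something is listening, not that both language models have finished loading.

```python
import socket
import time


def wait_for_port(host: str, port: int, timeout: float = 60.0, interval: float = 0.5) -> bool:
    """Return True once a TCP connection to (host, port) succeeds, False on timeout.

    Hypothetical helper for illustration; not shipped with LibreASR.
    """
    deadline = time.monotonic() + timeout
    while time.monotonic() < deadline:
        try:
            # Connecting proves only that a process is listening on the port.
            with socket.create_connection((host, port), timeout=interval):
                return True
        except OSError:
            # Connection refused or timed out: wait a little and retry.
            time.sleep(interval)
    return False
```

Calling `wait_for_port("localhost", 8080)` after starting the container returns `True` as soon as port 8080 accepts connections.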

Architecture¶

Overview¶

LibreASR is composed of

core and language models

api-server, which serves models over a gRPC API

api-bridge, which exposes a WebSocket API for clients to use

Client implementations
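The components above can be pictured as a small routing table: clients talk to api-bridge on one port, and api-bridge forwards audio to the api-server instance for the requested language. The sketch below only illustrates that layout. The port numbers come from the Quickstart log output; the names `GRPC_PORTS` and `backend_for` are hypothetical, and the real routing logic in api-bridge.py may look quite different.

```python
# Hypothetical illustration of the request flow; api-bridge.py may route differently.

# WebSocket endpoint, from the log line "[api-bridge] running on :8080".
BRIDGE_PORT = 8080

# gRPC ports, from the "[api-server] gRPC server running on ..." log lines.
GRPC_PORTS = {"en": 50051, "de": 50052}


def backend_for(lang: str, host: str = "localhost") -> str:
    """Return the host:port of the api-server instance that handles `lang`."""
    try:
        return f"{host}:{GRPC_PORTS[lang]}"
    except KeyError:
        raise ValueError(f"no api-server configured for language {lang!r}") from None
```

With this table, a request for German would be forwarded to `backend_for("de")`, i.e. the instance started by `python3 -u api-server.py de`.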

Features¶

These features are already implemented:

RNN-T network

Dynamic Quantization

English, German
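To give a feel for what the quantization feature listed above buys, here is a simplified, pure-Python illustration of 8-bit weight quantization: each float weight is stored as a small integer plus one shared scale factor. This shows the general idea only, under the assumption of a symmetric per-tensor scheme; it is not how LibreASR or its deep learning framework implements quantization, and the function names are invented for the example.

```python
from typing import List, Tuple


def quantize_int8(weights: List[float]) -> Tuple[List[int], float]:
    """Symmetric per-tensor quantization: map floats to integers in [-127, 127]."""
    max_abs = max((abs(w) for w in weights), default=0.0)
    # One scale for the whole tensor; fall back to 1.0 for all-zero input.
    scale = max_abs / 127 if max_abs > 0 else 1.0
    return [round(w / scale) for w in weights], scale


def dequantize(quantized: List[int], scale: float) -> List[float]:
    """Recover approximate float weights from the integers and the scale."""
    return [q * scale for q in quantized]
```

Each recovered weight differs from the original by at most about half the scale, which is the precision traded for a smaller and faster model.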

These are on the Roadmap:

French, Spanish, Italian, multilingual

Tuned language model fusion

Training¶

This section contains instructions on how you can train your own models using LibreASR.

Overview¶

RNN-T Model¶

get some audio data with transcriptions (e.g. librispeech, common voice, …)

edit create-asr-dataset.py if you use a custom dataset

process each of your datasets using create-asr-dataset.py, e.g.:

python3 create-asr-dataset.py /data/common-voice-english common-voice --lang en --workers 4

This results in multiple asr-dataset.csv files, which will be used for training.

edit the configuration testing.yaml to point to your data, choose transforms and tweak other settings

adjust and run libreasr.ipynb to start training

watch the training progress in tensorboard

the model with the best validation loss is saved to models/model.pth; the model with the best WER ends up in models/best_wer.pth
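The last step keeps two separate checkpoints, one per metric. A minimal sketch of that bookkeeping is shown below. The class `BestCheckpointTracker` and its `save` callback are hypothetical and only illustrate the logic; the actual training code in libreasr.ipynb may be organized differently.

```python
import math
from typing import Callable, List


class BestCheckpointTracker:
    """Hypothetical helper: remembers the best validation loss and the best WER."""

    def __init__(self, save: Callable[[str], None]) -> None:
        self.save = save  # callback that writes the current model to a path
        self.best_loss = math.inf
        self.best_wer = math.inf

    def update(self, val_loss: float, wer: float) -> List[str]:
        """Save to each path whose metric improved; return the paths written."""
        written = []
        if val_loss < self.best_loss:
            self.best_loss = val_loss
            self.save("models/model.pth")
            written.append("models/model.pth")
        if wer < self.best_wer:
            self.best_wer = wer
            self.save("models/best_wer.pth")
            written.append("models/best_wer.pth")
        return written
```

In a PyTorch training loop, the callback could be something like `lambda path: torch.save(model.state_dict(), path)`, with `update` called once per validation run. Because the two metrics do not always improve together, the two files can come from different epochs.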

Language Model¶

See this colab notebook or use this notebook.

Inference¶

Deployment¶

Example Apps¶

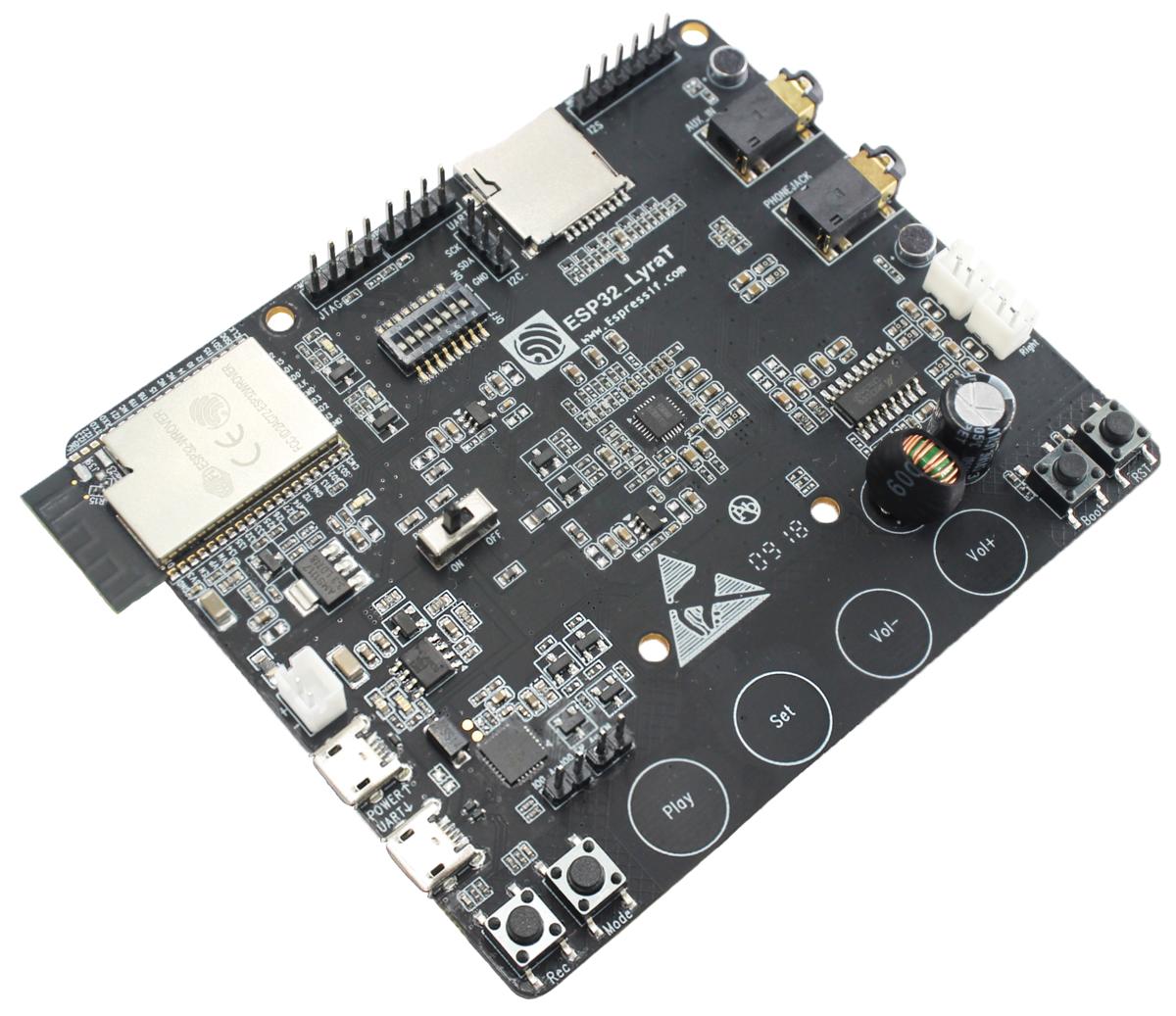

| React Web App | ESP32-LyraT |

Performance¶

| Model | Dataset | Network | Params | CER (dev) | WER (dev) |
|---------|---------|----------|--------|-----------|-----------|
| english | 1400h | 6-2-1024 | 70M | 18.9 | 23.8 |
| german | 800h | 6-2-1024 | 70M | 23.2 | 37.6 |

While this is clearly not SotA, training the models for longer and on multiple GPUs (instead of a single 2080 Ti) would likely yield better results.
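For readers unfamiliar with the metrics in the table: CER and WER are conventionally the edit distance between the reference transcript and the model output, divided by the reference length, counted in characters and words respectively. The sketch below computes both as percentages, on the assumption that the table reports percentages. It is a generic implementation, not LibreASR's evaluation code, which may normalize text (casing, punctuation) differently.

```python
from typing import Sequence


def edit_distance(ref: Sequence, hyp: Sequence) -> int:
    """Levenshtein distance: minimum substitutions, insertions and deletions."""
    prev = list(range(len(hyp) + 1))
    for i, r in enumerate(ref, start=1):
        curr = [i]
        for j, h in enumerate(hyp, start=1):
            cost = 0 if r == h else 1
            curr.append(min(prev[j] + 1,          # deletion
                            curr[j - 1] + 1,      # insertion
                            prev[j - 1] + cost))  # substitution or match
        prev = curr
    return prev[-1]


def wer(reference: str, hypothesis: str) -> float:
    """Word error rate in percent, relative to the number of reference words."""
    ref_words = reference.split()
    if not ref_words:
        raise ValueError("reference must contain at least one word")
    return 100.0 * edit_distance(ref_words, hypothesis.split()) / len(ref_words)


def cer(reference: str, hypothesis: str) -> float:
    """Character error rate in percent, relative to the reference length."""
    if not reference:
        raise ValueError("reference must not be empty")
    return 100.0 * edit_distance(reference, hypothesis) / len(reference)
```

A single wrong word counts fully toward WER but often only changes a few characters, which is why WER is typically the higher of the two numbers.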

See releases for pretrained models.

Contributing¶

Feel free to open an issue, create a pull request and join the Discord.

You may also contribute by training a large model for longer.